Julie R. Williamson (Papers Co-Chair 2022 and 2023)

TL;DR

- Categorical recommendations are a better representation of reviewers’ views and a better indicator of paper outcomes.

- Ordinal recommendations are a poor indicator of paper outcomes, and lean towards a negative view of papers even when reviewers recommend acceptance.

- Rigour is the most important criterion in paper success, followed by significance, followed by originality.

Reflecting on CHI 2022

The only things that are certain are death, taxes, and optimistic volunteers trying to improve the CHI reviewing process. In 2022, we introduced a revise and resubmit process that allowed for major revisions. This came with a lot of uncertainty, but was driven by a conviction that this would lead to a “better” review process, published papers, and author experience.

I’m writing this post in my capacity as Papers Co-Chair for CHI 2022 and 2023. I’m a faculty member at the University of Glasgow in immersive technologies, but I have extensive experience volunteering for SIGCHI and ACM in publications roles. The goal of this post is to review the data from CHI 2022 and help make the CHI 2023 process more transparent and consistent. This summary should help reviewers and authors with information that didn’t exist when we started the revise and resubmit process in 2022. Once the CHI 2023 reviews are released, we’ll write a follow-up post with an updated analysis.

A significant change that came with revise and resubmit was the removal of the ordinal “scores” in favour of a categorical “recommendation.” I was keen to move away from decision making on the flawed premise of averaged ordinal data. We removed the asymmetric nine point scale (from 1 – I would argue for rejecting this paper to 5 – I would argue strongly for accepting this paper) and replaced it with a categorical recommendation.

Reviewers could indicate one of five options: Accept (A), Accept or Revise/Resubmit (A/RR), Revise/Resubmit (RR), Revise/Resubmit or Reject (RR/X), Reject (X). This isn’t a perfect solution, but I argue it is substantially better than what we had before. There are limitations: reviewers vary in what they think can realistically be achieved in a revise/resubmit cycle, and there is confusion with other review processes that use different terms or use the same terms differently. Although we removed the primary ordinal score, we did not remove all ordinal data. Inspired by ACM’s Guidelines for Pre-Publication Review, we included four-point ordinal scales for Originality, Significance, and Rigour (Low, Medium, High, Very High, another asymmetric scale).

Ironically, in this analysis, I have averaged ordinal data, expressed categories as ordinal scales, and normalised ordinal scales, along with other tricks commonly used. I’ll point out anytime I’ve done this, and in some cases it will highlight how flawed these common practices are. In other cases, I’ll just recommend accepting the limitations of this kind of analysis, which carries an acceptable uncertainty for reflection, but not for deciding the fate of papers!

Recommendation or Rating?

This post aims to answer a key question from 2022: did changing from a score to a recommendation improve the process for paper decisions? I believe the data says yes.

Figure 1 (left) shows a histogram of the 2022 ordinal responses (originality, significance, rigour) for each paper (averaged across reviewers), split into accepted and rejected papers, and rescaled to a five-point scale for comparison. These averaged ordinal scores show bell curves with a relatively large overlap between accepted and rejected papers. Figure 1 (right) shows a histogram of the categorical recommendations (represented as ordinal values and averaged) for accepted and rejected papers. The categorical data results in two clearly separated distributions, with rejected papers in a steep distribution centred on 1, and accepted papers in a relatively flat distribution spreading from 2 to 5.
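As a minimal sketch of the two transformations behind Figure 1: the snippet below averages the three criteria scales and rescales them to five points, and separately maps recommendations onto 1 to 5 before averaging. The numeric mappings and the linear 5/4 rescale are assumptions for illustration (the rescale is at least consistent with the 1.25 minimum in the Figure 2 data table), and the function names are hypothetical.

```python
from statistics import mean

# Assumed numeric codes; the post does not state the exact mapping used.
ORDINAL = {"Low": 1, "Medium": 2, "High": 3, "Very High": 4}
RECOMMENDATION = {"X": 1, "RR/X": 2, "RR": 3, "A/RR": 4, "A": 5}


def review_ordinal_score(originality, significance, rigour):
    """Average one reviewer's three criteria and rescale from 4 to 5 points."""
    raw = mean(ORDINAL[v] for v in (originality, significance, rigour))
    return raw * 5 / 4  # assumed linear rescale: 1..4 becomes 1.25..5


def paper_ordinal_score(reviews):
    """Average the rescaled ordinal score across a paper's reviewers."""
    return mean(review_ordinal_score(*review) for review in reviews)


def paper_recommendation_score(recommendations):
    """Treat categories as ordinal values and average across reviewers."""
    return mean(RECOMMENDATION[r] for r in recommendations)


reviews = [
    ("High", "Medium", "Medium"),
    ("Medium", "Medium", "Low"),
]
print(paper_ordinal_score(reviews))
print(paper_recommendation_score(["RR", "RR/X", "X", "X"]))
```

Both functions end in an average of ordinal codes, which is exactly the kind of shortcut this post cautions against for decisions; it is used here only to reproduce the shape of the histograms.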

That “noisy middle” is where we hope to see the most important discussions during the review process, but I argue that the overlap shown in these figures represents two semantically different discussions. On the left, the overlap represents differences in opinion on how a paper scores on a subjective scale, that is “I think that this paper is only ‘high’ on a four-point scale.” On the right, the overlap represents differences in opinion on the preferred outcome of the paper, that is “I think this paper should be accepted.” I would argue that the overlap created by ordinal data is more a representation of noise than the overlap created by categorical data. This is important, as we’ll see in the next section!

Another issue highlighted by these visualisations is that the CHI community has a problem with negativity: on ordinal scales we don’t rate highly the papers we like and we don’t crush the papers we dislike. I couldn’t say whether this is simple bias towards the centre, or more complex social desirability bias, but the distributions above show that the ordinal data is a poor representation of what a reviewer means by their scores. Let’s unpack that further.

What do Reviewers Mean?

A commonly understood issue with ordinal scales is that the numbers on the scales mean different things to different people. In survey research, that measurement error is solved by carrying the difference over a large N. For any given paper we don’t have a large N. Within the CHI community, a common anecdotal argument for not implementing a fully anonymous review process is how “valuable” it is for the committee to see the reviewer names, for example “I know Julie always gives too high of a score so we should really be looking at this like it’s not that positive of a review.” This is the stuff of nightmares when we want a consistent and transparent review process.

The data confirms that reviewer recommendations vary widely when compared to the ordinal scores they give papers. There is a trend towards a lower score as reviewer recommendation is more negative, but the spread within a recommendation is substantial. Some reviewers gave ordinal scores as low as 2.1 when recommending accept, while others gave scores as high as 4.2 while recommending reject, and everything in-between.

This data confirms the semantic difference between the overlap in Figure 1 left and Figure 1 right. I would argue that the ordinal scores are only useful and reflective of reality when set alongside the categorical recommendation, and decisions should be made based on the categorical recommendation as the primary criterion. For 2023, we’ve improved how the categories will be viewable in aggregate in PCS, allowing subcommittees to sort more easily by category during the PC meeting.

What are the Most Important Criteria?

Moving away from a single ordinal scale toward multiple scales based on different criteria also gives us some insights into which criteria most impact the success of a paper. Some common questions the CHI community has grappled with: Is CHI too fixated on originality? Will a paper with low rigour but a cool idea still be accepted? Do we not care enough about rigour?

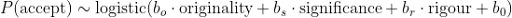

To address this question, we performed a Bayesian logistic regression to estimate the strength of the (unnormalised) ordinal scores in predicting the ultimate accept/reject outcome. This model had the form:

$$P(\text{accept}) = \operatorname{logistic}\left(b_o \cdot \text{originality} + b_s \cdot \text{significance} + b_r \cdot \text{rigour} + b_0\right)$$

where the coefficients $b_o$, $b_s$, $b_r$ and $b_0$ were estimated. Using very weakly informative priors on the coefficients, we fitted the model with PyMC3. A forest plot of the estimates is shown in Figure 3. All of the ordinal scores have some predictive power, but rigour has a substantially larger effect than significance or originality on the probability of a paper being accepted.
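To give a feel for what these estimates mean, here is a small sketch that converts the posterior medians from the Figure 3 data table into odds ratios. It assumes the reported values are log-odds coefficients per one-step increase on the raw criteria scales. The intercept is not reported in this post, so `acceptance_probability` takes it as a hypothetical argument rather than guessing a value.

```python
import math

# Posterior medians copied from the Figure 3 data table.
posterior_median = {
    "Originality": 1.305,
    "Significance": 2.163,
    "Rigour": 2.690,
}

# In a logistic regression, exp(coefficient) is the multiplicative change
# in the odds of acceptance for a one-step increase in that criterion.
odds_ratio = {name: math.exp(b) for name, b in posterior_median.items()}


def logistic(x):
    """Standard logistic function mapping log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def acceptance_probability(scores, intercept):
    """Probability of acceptance for given criteria scores.

    `intercept` is hypothetical: the post does not report the fitted value.
    """
    log_odds = intercept + sum(
        posterior_median[name] * value for name, value in scores.items()
    )
    return logistic(log_odds)


for name, ratio in odds_ratio.items():
    print(f"{name}: odds ratio per step = {ratio:.1f}")
```

Under that reading, one extra step on a scale multiplies the odds of acceptance by roughly 3.7 for originality, 8.7 for significance and 14.7 for rigour, with the other two scores held fixed.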

Many thanks to John Williamson who completed the logistic regression analysis for the criteria scales.

A successful paper will generally score well across all criteria, but the strongest predictor of success is rigour, followed by significance, followed by originality. This opens up some interesting questions, as the values associated with these criteria will vary broadly across different subcommittees and communities of practice. For 2023, we’ll continue using these criteria on the review form with some minor changes to standardise these scales to symmetric 5 point scales.

Categorical Decisions and Paper Outcomes

The proportion of reviewers who are positive about a paper in terms of categorical recommendation is the best indicator of paper outcomes. Figure 4 shows the number of papers that were Accepted or Rejected based on the proportion of reviewers who recommended revise and resubmit or better in 2022. About half of the submissions in 2022 had no reviewer recommending revise and resubmit or better. Not a single paper in this category was ultimately accepted in 2022.
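The acceptance rate per bucket can be read off the Figure 4 data table with a few lines of arithmetic. This sketch uses only the counts reported in that table; the variable and function names are illustrative.

```python
# Counts from the Figure 4 data table:
# share of reviewers recommending RR or better -> (accepted, rejected)
outcomes = {
    0.00: (0, 1151),
    0.25: (9, 449),
    0.50: (52, 137),
    0.75: (175, 21),
    1.00: (379, 5),
}


def acceptance_rate(accepted, rejected):
    """Fraction of papers in a bucket that were ultimately accepted."""
    total = accepted + rejected
    return accepted / total if total else 0.0


rates = {share: acceptance_rate(a, r) for share, (a, r) in outcomes.items()}

total_papers = sum(a + r for a, r in outcomes.values())
share_no_support = sum(outcomes[0.00]) / total_papers

for share, rate in rates.items():
    print(f"{share:.0%} positive reviewers: {rate:.1%} accepted")
print(f"Papers with no positive reviewer: {share_no_support:.1%}")
```

The rate climbs from 0% with no positive reviewers, through about 2% with a quarter positive, to about 99% when every reviewer is positive.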

Based on this data, we set the threshold for revise and resubmit in 2023: at least one reviewer or 1AC must recommend revise and resubmit or better for authors to have the opportunity to revise and resubmit. This means any single reviewer or AC can “save” a paper even if all others recommend reject. But it’s clear that a greater proportion of positive reviewers leads to a higher chance of success in the revise and resubmit cycle. In 2022, if only one reviewer was positive, it’s very likely your paper was ultimately rejected. If most reviewers were positive, it’s very likely your paper was accepted. When authors receive their reviews in November 2022, if they meet the threshold for revise and resubmit they must also consider how positive their reviews are when determining if they want to go through the revision cycle.
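The threshold rule can be sketched as a simple check over a paper’s recommendations. This is an illustration rather than how PCS implements it: the function names are hypothetical, and treating A, A/RR and RR (but not RR/X) as “revise and resubmit or better” is an assumption.

```python
# Assumed reading of "revise and resubmit or better": RR/X is excluded.
POSITIVE = {"A", "A/RR", "RR"}


def eligible_for_revise_and_resubmit(recommendations):
    """True if at least one reviewer or AC recommends RR or better."""
    return any(r in POSITIVE for r in recommendations)


def positive_share(recommendations):
    """Proportion of reviewers and ACs recommending RR or better."""
    return sum(r in POSITIVE for r in recommendations) / len(recommendations)


paper = ["X", "RR/X", "X", "RR"]
print(eligible_for_revise_and_resubmit(paper))
print(positive_share(paper))
```

A paper like the one above clears the threshold on the strength of a single RR, but its positive share of 0.25 puts it in the bucket where very few papers were accepted in 2022.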

Open Questions

To conclude, I’ll leave some open questions that I think this data exposes and that will be interesting to reflect on as we enter the CHI 2023 review process.

Does a categorical recommendation instead of an ordinal score lead to a better decision process? I believe the data says yes. The recommendation is a better indicator of reviewers’ views when compared with the ordinal scales, and both authors and programme committees can have more consistent expectations going into the PC meeting using categorical recommendations for decisions. There is still an open concern that before reviews are released, it can be hard to predict which papers will be successful. Changing the review form won’t fix this, but I hope that reducing noise in the decision process is a step in the right direction.

What makes a rigorous paper? The strongest predictor of paper success was rigour, but it is clear that what rigour means and how it is assessed will vary widely across the subcommittees and different communities of practice. Beyond the ordinal scales, we don’t have review process data or programme metadata that would give us further insights on rigour. One approach I would like to see is incorporating artefact metadata into conference proceedings, and as a community exploring the wide range of artefacts that underpin our work.

Does Revise and Resubmit lead to better papers? In this analysis, I didn’t look at how papers changed after revise and resubmit, or any broader metrics of “quality” with respect to the final programme. This is a more complex issue than analysing ordinal data can achieve, but it’s something we should be reflecting on in the revise and resubmit process.

Notes

The data is by definition incomplete because submissions conflicted with me are not included. Totals will not add up to some published numbers for this reason. Thanks to my co-chairs in 2022 and 2023, and to the volunteers who provided feedback on this work.

Stefanie Mueller, Julie R. Williamson, Max Wilson

CHI 2023 Papers Chairs

Steven Drucker, Julie R. Williamson, Koji Yatani

CHI 2022 Papers Chairs

Scales

CHI 2021 “Score” Scale

Strong Accept: I would argue strongly for accepting this paper; 5.0

. . . Between possibly accept and strong accept; 4.5

Possibly Accept: I would argue for accepting this paper; 4.0

. . . Between neutral and possibly accept; 3.5

Neutral: I am unable to argue for accepting or rejecting this paper; 3.0

. . . Between possibly reject and neutral; 2.5

Possibly Reject: The submission is weak and probably shouldn’t be accepted, but there is some chance it should get in; 2.0

. . . Between reject and possibly reject; 1.5

Reject: I would argue for rejecting this paper; 1.0

CHI 2022 Ordinal Scales (Originality, Significance, Rigour)

Very High

High

Medium

Low

Data Tables

These tables provide a numerical representation for Figures in this post.

Figure 1 Left (Ordinal Scores)

Figure Description: Histogram of paper scores averaged from the ordinal scales and normalised onto 5 point scale. Separated by accepted and rejected papers, resulting bell curves overlap between 2.5 and 3.5. See data table:

| Bin Right Edge (Accepted Papers) | Count (Accepted Papers) | Bin Right Edge (Rejected Papers) | Count (Rejected Papers) |
|---|---|---|---|
| 2.45 | 14 | 1.51 | 24 |
| 2.71 | 26 | 1.77 | 63 |
| 2.97 | 59 | 2.03 | 224 |
| 3.23 | 72 | 2.29 | 284 |
| 3.49 | 68 | 2.55 | 500 |
| 3.75 | 104 | 2.81 | 299 |
| 4.01 | 54 | 3.07 | 269 |
| 4.27 | 56 | 3.33 | 63 |
| 4.53 | 66 | 3.59 | 25 |
| 4.79 | 93 | 3.85 | 2 |

Figure 1 Right (Categorical Recommendation)

Figure Description: Histogram of paper scores from recommendation category represented as a 5 point scale. Separated by accepted and rejected papers, rejected papers have a steep falloff from 1 to 3.5, accepted papers have a relatively flat distribution from 2 to 5. See data table:

| Bin Right Edge (Accepted Papers) | Count (Accepted Papers) | Bin Right Edge (Rejected Papers) | Count (Rejected Papers) |
|---|---|---|---|
| 2.3 | 14 | 1.25 | 392 |
| 2.6 | 26 | 1.5 | 397 |
| 2.9 | 59 | 1.75 | 349 |
| 3.2 | 72 | 2.0 | 276 |
| 3.5 | 68 | 2.25 | 148 |
| 3.8 | 104 | 2.5 | 100 |
| 4.1 | 54 | 2.75 | 44 |
| 4.4 | 56 | 3.0 | 38 |
| 4.7 | 66 | 3.25 | 10 |
| 5 | 93 | 3.5 | 8 |

Figure 2 (Boxplot)

Figure description: Boxplot of reviewer ordinal responses grouped by categorical recommendation. Plot shows significant overlap between all categories, with a downward trend in median from accept to reject. See data table:

| Statistic | Accept | Accept or RR | RR | RR or Reject | Reject |
|---|---|---|---|---|---|
| Max | 5.0 | 5.0 | 5.0 | 5.0 | 4.2 |
| Upper Quartile | 4.2 | 3.8 | 3.3 | 2.9 | 2.5 |
| Median | 3.8 | 3.3 | 2.9 | 2.5 | 2.1 |
| Lower Quartile | 3.3 | 2.9 | 2.5 | 2.5 | 1.7 |
| Min | 2.1 | 2.1 | 1.25 | 1.25 | 1.25 |

Figure 3 (Forest Plot)

Figure Description: Forest plot shows relative predictive power of ordinal scales for paper success. Rigour has the strongest predictive power, followed by significance, followed by originality. See data table:

| Criterion | Median | HDI 3% | HDI 97% |
|---|---|---|---|
| Originality | 1.305 | 0.972 | 1.650 |
| Significance | 2.163 | 1.797 | 2.524 |
| Rigour | 2.690 | 2.364 | 3.016 |

Figure 4 (Bar Chart)

Figure Description: Bar chart showing the proportion of reviewers with a favourable recommendation, grouped by paper outcome as Accept or Reject. The greater the proportion of favourable reviews, the greater the likelihood of eventual acceptance. See data table:

| Proportion of favourable reviewers | Accept | Reject |
|---|---|---|
| 0% | 0 | 1151 |
| 25% | 9 | 449 |
| 50% | 52 | 137 |
| 75% | 175 | 21 |
| 100% | 379 | 5 |